
With the dramatic increase in IoT devices, the rise of 5G, and the falling cost of data storage, industrial companies are searching for efficient ways to optimize their data. They need data consumption management tools that can improve employee productivity and reduce costs without a long ramp-up time.

Data Operations, better known as DataOps, is a concept that is growing in popularity within industry. DataOps refers to a general method of structuring data so that it can be used across the enterprise. Unlike cloud computing or Big Data platforms, DataOps by definition assumes that data is democratized: automation delivers it in a usable format without requiring intervention from technical teams.

This article dives into some of the core benefits that manufacturers should consider when incorporating DataOps into their strategy.

As manufacturers continue to mature their digital capabilities, they must consider how to integrate data management into their strategy. Data management refers to the policies and practices your organization sets to adhere to security protocols.

However, planning for how employees will use your data is an often overlooked step in this process. Practices such as DataOps ensure that your data management policies support your business needs. Without a structured strategy and tools to transform your raw data into valuable insights across your enterprise, you may fall into the trap of collecting data yet failing to turn it into savings.

Of course, manufacturers are known for generating Big Data, yet McKinsey notes that manufacturers have viewed data management “as a big-build waterfall IT project rather than a series of agile quick-cycle iterations, blocking progress downstream until the data are ‘perfect.’”

Now, with a deeper focus on data democratization and self-service technologies, DataOps is a method that can drastically improve your operational efficiency, which in turn generates savings, boosts morale, and yields better products.

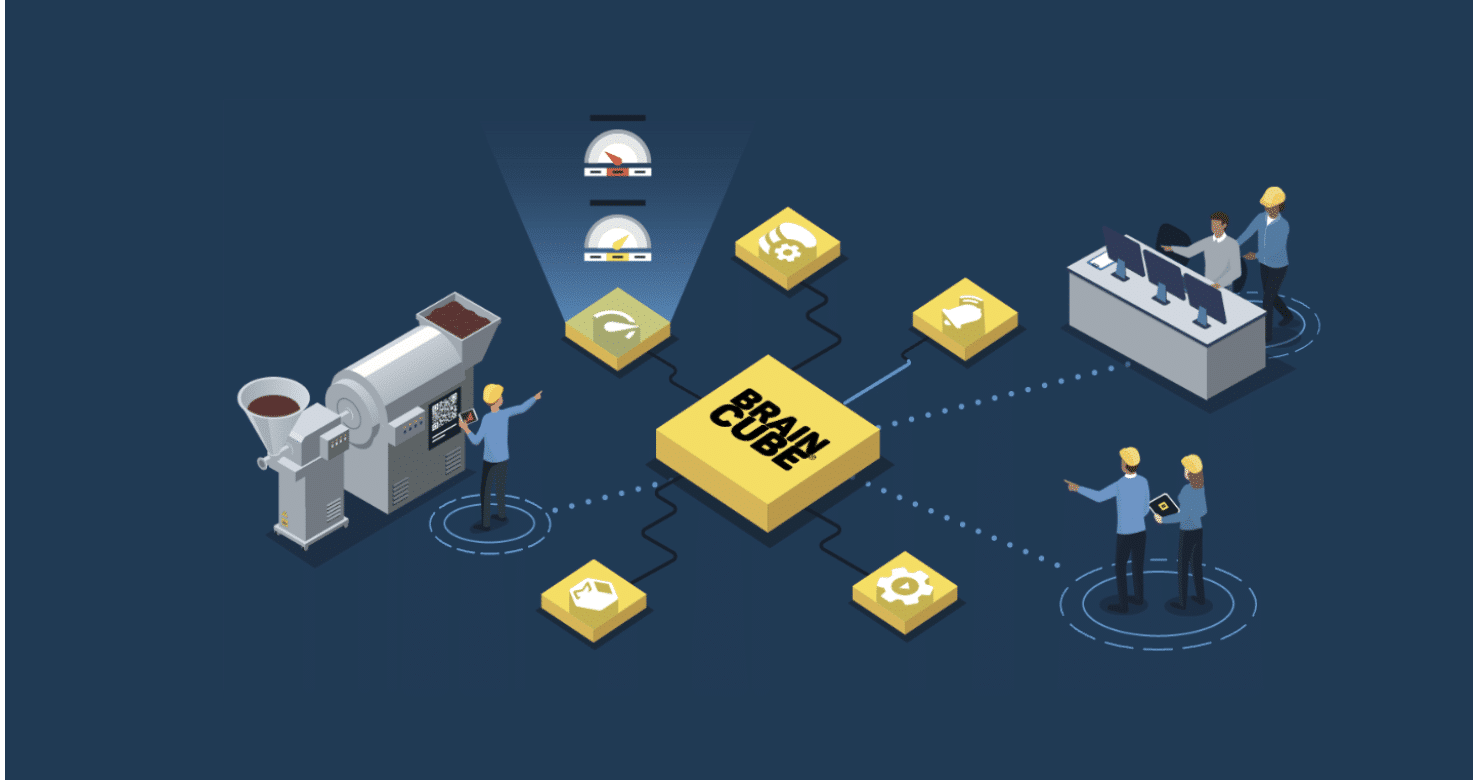

While DataOps is an overarching philosophy about how people can make better use of data, strategic efforts are often backed by software that augments data delivery through integrations and automation.

DataOps essentially creates routes and routines that move data automatically, efficiently, and repeatedly across the organization using analytic code.
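To make the idea of a “route and routine” more concrete, here is a minimal sketch, assuming a route is simply a chain of functions (extract, transform, deliver) that a scheduler calls again and again. The `Reading` record, the `drop_missing` and `round_values` steps, and `run_routine` are hypothetical names invented for illustration; they are not part of any Braincube product or specific library.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional

@dataclass(frozen=True)
class Reading:
    """Hypothetical record: one sensor reading from a production asset."""
    asset: str
    tag: str
    value: Optional[float]

# A routine step takes a batch of readings and returns a transformed batch.
Step = Callable[[List[Reading]], List[Reading]]

def drop_missing(rows: List[Reading]) -> List[Reading]:
    """Remove readings with no value, e.g. after a sensor dropout."""
    return [r for r in rows if r.value is not None]

def round_values(rows: List[Reading]) -> List[Reading]:
    """Round values to 2 decimals; assumes drop_missing already ran."""
    return [Reading(r.asset, r.tag, round(r.value, 2)) for r in rows]

def run_routine(
    extract: Callable[[], List[Reading]],
    steps: List[Step],
    deliver: Callable[[List[Reading]], None],
) -> int:
    """Run one pass of a route: extract, apply each step in order, deliver.

    Calling this on a schedule is what makes the route repeatable.
    Returns the number of readings delivered.
    """
    rows = extract()
    for step in steps:
        rows = step(rows)
    deliver(rows)
    return len(rows)

# Usage: an in-memory source and sink stand in for real connectors.
raw = [
    Reading("press-1", "temp_c", 81.234),
    Reading("press-1", "temp_c", None),
    Reading("press-2", "temp_c", 79.9),
]
sink: List[Reading] = []
delivered = run_routine(lambda: raw, [drop_missing, round_values], sink.extend)
print(delivered)  # 2
```

Keeping each step a plain function means the same steps can be reused across many routes, which is one way automation saves data professionals from repeating manual clean-up work.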

Automation is a significant component of DataOps: it makes the process more efficient and helps data professionals save time and focus on higher-priority initiatives. Simply put, DataOps makes data more reliable and usable for your organization.

Manufacturers vary drastically in their stage of digital maturity. For those earlier in their journey, the focus centers on data collection through smart, connected assets and systems. Those farther along are focusing more effort on scaling their use cases across the organization. Many industries are still challenged by systems that differ from plant to plant and, as such, rely on tools like public clouds and IIoT platforms to bring all of the various data sources into a centralized environment.
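When systems differ from plant to plant, one common step in centralizing data is mapping each site’s tag names and units onto a shared schema. The sketch below assumes a hand-maintained lookup table per plant; the plant names, tag names (`TT101`, `Dryer.TempF`, and so on), and the `harmonize` function are all hypothetical and chosen only for illustration.

```python
from typing import Callable, Dict, Tuple

Converter = Callable[[float], float]

def f_to_c(x: float) -> float:
    """Convert degrees Fahrenheit to Celsius."""
    return (x - 32.0) * 5.0 / 9.0

def identity(x: float) -> float:
    """Leave values that already use the shared unit unchanged."""
    return x

# Hypothetical tag dictionaries: each plant names (and scales) the same signals differently.
TAG_MAP: Dict[str, Dict[str, Tuple[str, Converter]]] = {
    "plant_a": {
        "TT101": ("dryer_temp_c", identity),
        "FT200": ("pulp_flow_lpm", identity),
    },
    "plant_b": {
        "Dryer.TempF": ("dryer_temp_c", f_to_c),  # this site reports Fahrenheit
        "Pulp.Flow": ("pulp_flow_lpm", identity),
    },
}

def harmonize(plant: str, raw: Dict[str, float]) -> Dict[str, float]:
    """Map one plant's raw tags onto the shared schema.

    Tags with no mapping are dropped here; a real pipeline would likely log them.
    """
    mapping = TAG_MAP[plant]
    out: Dict[str, float] = {}
    for tag, value in raw.items():
        if tag in mapping:
            name, convert = mapping[tag]
            out[name] = convert(value)
    return out

a = harmonize("plant_a", {"TT101": 82.5, "FT200": 410.0, "XX999": 1.0})
b = harmonize("plant_b", {"Dryer.TempF": 176.0, "Pulp.Flow": 395.0})
print(a)  # {'dryer_temp_c': 82.5, 'pulp_flow_lpm': 410.0}
print(b)  # {'dryer_temp_c': 80.0, 'pulp_flow_lpm': 395.0}
```

Once both plants produce the same field names in the same units, a single dashboard or analysis can be reused across sites instead of being rebuilt for each one.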

Regardless of where you sit from a maturity standpoint, an essential need for all organizations is data preparation. Here we are not referring to data movement, though that is certainly part of it; rather, we mean the specific business use cases and value that can be templatized and scaled across the enterprise.

Today, many industries are up against scaling challenges because their existing data infrastructures can no longer support increasing project complexity and volume. Expectations of end-to-end product traceability, predictive modeling, and other needs are pushing IT and data scientists to the limit. Citizen data scientists and developers are on the rise; in the meantime, manufacturers can act now to develop DataOps use cases that can scale.

For example, a food and beverage customer had limited visibility into their real-time production performance. They typically had to wait until the next shift or day to get their performance reports, and only then could they adjust their strategy. With Braincube, they connected to their assets to see live production data. Simply by displaying live data on the shop floor, teams were able to improve yield by 6.5%, all in less than a month.

So often, we see digital transformation as a long and daunting journey, but realistically there are many solutions that can create high value along the way. As the pendulum continues to shift from a technical focus to an end-user, data-centric focus, we see more and more use cases implemented in days.

DataOps (and Braincube applications) aim to alleviate pain points by solving data challenges. How can you configure your data so that you have a continuous supply of meaningful data to all teams at all levels of the organization?

DataOps centers on how people can more efficiently use data. This means thinking through the benefits that the right data can provide, then orchestrating the structure of data collection, transformation, and access for teams. Where is data collected, and where does it need to go? How frequently and in what format?
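One way to capture the answers to those questions is to write each data flow down as a small declarative spec. The sketch below assumes a route is described by its source, destination, delivery interval, and format, and that a scheduler asks which routes are due; `DataRoute`, `due_routes`, `ALLOWED_FORMATS`, and every identifier in the usage lines are hypothetical, not a real product or library API.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Dict, List

# Assumption: the set of delivery formats is chosen for illustration only.
ALLOWED_FORMATS = {"csv", "json", "parquet"}

@dataclass(frozen=True)
class DataRoute:
    """Where data is collected, where it goes, how often, and in what format."""
    name: str
    source: str        # hypothetical identifier, e.g. a machine or historian
    destination: str   # hypothetical identifier, e.g. a team dashboard
    every: timedelta   # how frequently the route should deliver
    fmt: str           # delivery format

    def __post_init__(self) -> None:
        # Reject specs that could never run sensibly.
        if self.every <= timedelta(0):
            raise ValueError(f"{self.name}: interval must be positive")
        if self.fmt not in ALLOWED_FORMATS:
            raise ValueError(f"{self.name}: unsupported format {self.fmt!r}")

def due_routes(
    routes: List[DataRoute], last_run: Dict[str, datetime], now: datetime
) -> List[str]:
    """Names of routes that have never run or whose interval has elapsed."""
    return [
        r.name
        for r in routes
        if r.name not in last_run or now - last_run[r.name] >= r.every
    ]

routes = [
    DataRoute("line_speed_live", "paper_machine_3", "shift_dashboard",
              timedelta(minutes=1), "json"),
    DataRoute("daily_quality", "lab_system", "quality_team",
              timedelta(days=1), "csv"),
]
now = datetime(2024, 1, 1, 8, 0)
last = {
    "line_speed_live": now - timedelta(minutes=2),
    "daily_quality": now - timedelta(hours=3),
}
print(due_routes(routes, last, now))  # ['line_speed_live']
```

Validating specs when they are created, rather than when a delivery fails, is a design choice that keeps a misconfigured route from silently starving a team of data.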

There are many tools, such as sensors, connectors, and integrator software, that can be used to fast-track data mapping. Even though the technical capabilities exist, you still need to lead with strategy. Beyond deciding how to roll out a data-centric culture, you also need to plan the timing of training, new process documentation, and rollout. Many companies we work with develop a phased approach in which they generate one or two powerful use cases that can then grow and expand across different sites or business units.

We’ve seen more DataOps initiatives driven by the C-suite. Leadership is looking to operationalize data in a timely, consistent, and repeatable manner. To do so, we think it’s important to look to trusted business-oriented software and services. Regardless of how you decide to implement DataOps at your organization, you want to think through how to align an agile, flexible approach with your current strategy. If you want help building that plan, we’re happy to help: book a demo with us below.
